Industry surveys and reports frequently confirm that software testing is still a bottleneck, even after the adoption of modern development processes like Agile, DevOps, and continuous integration/deployment. In some cases, software teams aren't testing nearly enough and have to deal with bugs and security vulnerabilities in the later stages of the development cycle, which creates the false impression that these new processes can't deliver on their promise. One solution to certain classes of issues is shift-right testing, which relies on monitoring the application in a production environment, but it requires a rock-solid infrastructure to roll back new changes if a critical defect arises.

As a result, organizations are still missing deadlines, and quality and security are suffering. But there's a better way! To test smarter, organizations are using a technology called test impact analysis to understand exactly what to test. This data-driven approach supports both shift-left and shift-right testing.

Agile and DevOps and the Testing Bottleneck

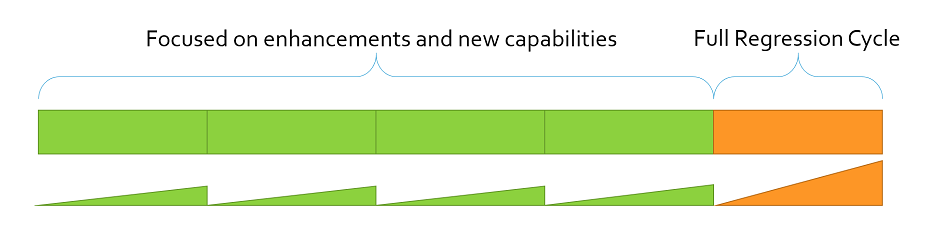

Testing in any iterative process is a compromise on how much testing can be done in a limited cycle time. In most projects, it's impossible to do a full regression on each iteration. Instead, a limited set of tests is run, and exactly what to test is based on best guesses. Testing is also back-loaded in the cycle since there usually aren't enough completed new features to test. The resulting effort vs. time graph ends up like a saw tooth, as shown in Figure 1. In each cycle only a limited set of tests is executed, until the final cycle, where a full regression test is performed.

Figure 1: Agile processes result in a "saw tooth" of testing activity. Only the full regression cycle is able to do a "complete" test.

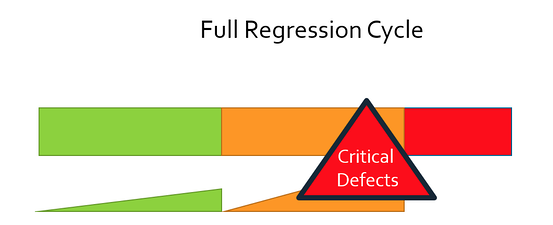

Unfortunately, no project reaches the final cycle with zero bugs and zero security vulnerabilities. Finding defects at this stage adds delays as bugs are fixed and retested. Even with those delays, many bugs still make their way into the deployed product, as illustrated in Figure 2.

Figure 2: Integration and full regression testing are never error-free. Late-stage defects cause schedule and cost overruns.

This situation has resulted in the adoption of what has been coined "shift-right testing," in which organizations continue to test their application into the deployment phase. The intention of shift-right testing is to augment and extend testing efforts with techniques best suited to the deployment phase, such as API monitoring, toggling features in production, and gathering feedback from real-life operation.

Shift Right Testing

The difficulties in reproducing realistic test environments and using real data and traffic in testing led teams to use production environments to monitor and test their applications. There are benefits to this, such as being able to test applications against live production traffic, which supports fault-tolerance and performance improvements. A common use case is the so-called canary release, in which a new version of the software is released to a small subset of customers first, and then rolled out to an increasingly larger group as bugs are reported and fixed. Roku, for example, does this for updating their device firmware.
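One common way to pick the "small subset of customers" for a canary release is to hash a stable user identifier into a bucket and compare it against the current rollout percentage. The sketch below illustrates that idea only; the function name `in_canary`, the `salt` value, and the user ID format are assumptions made for this example, not something described in the article or used by any particular vendor.

```python
import hashlib


def in_canary(user_id: str, rollout_percent: int, salt: str = "release-2") -> bool:
    """Return True if this user falls inside the canary cohort.

    Hypothetical helper: the salt and ID format are illustrative assumptions.
    """
    # Hash the salted ID so the assignment is stable across calls and machines.
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    # Users whose bucket is below the threshold receive the new version.
    return bucket < rollout_percent


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    for percent in (1, 10, 50, 100):
        cohort = sum(in_canary(u, percent) for u in users)
        print(f"{percent:>3}% rollout -> {cohort} of {len(users)} users")
```

Because each user's bucket never changes, anyone included at a low percentage stays included as the percentage grows, which is what allows the rollout to widen gradually without moving customers back and forth between versions.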

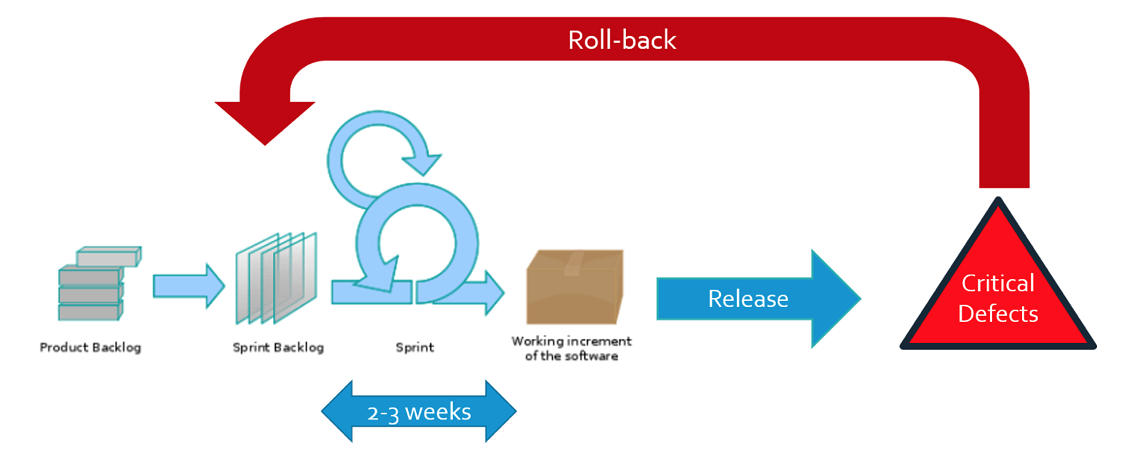

Shift-right testing relies on a development infrastructure that can roll back a release in the event of critical defects. For example, a severe security vulnerability in a canary release means rolling back the release until an updated release is ready, as you can see in the illustration here:

Figure 3: Shift right testing relies on solid development operations infrastructure to roll back releases in the face of critical defects.
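The roll-back behavior described above can be sketched as a staged rollout loop that widens exposure step by step and reverts to the previous release as soon as a critical defect is reported. This is a minimal illustration under stated assumptions: `staged_rollout`, `RolloutResult`, and the `has_critical_defect` callback are hypothetical names, and in a real pipeline the callback would be backed by production monitoring rather than a lambda.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence


@dataclass
class RolloutResult:
    final_percent: int          # share of users on the new release at the end
    rolled_back: bool           # True if a critical defect forced a rollback
    history: List[str] = field(default_factory=list)


def staged_rollout(
    stages: Sequence[int],
    has_critical_defect: Callable[[int], bool],
) -> RolloutResult:
    """Widen a release through the given percentage stages.

    `has_critical_defect(percent)` is a hypothetical health check that is
    consulted after each stage is deployed.
    """
    history: List[str] = []
    current = 0
    for percent in stages:
        current = percent
        history.append(f"deploy {percent}%")
        if has_critical_defect(percent):
            # Revert everyone to the previous release and stop the rollout.
            history.append("rollback to previous release")
            return RolloutResult(0, True, history)
    return RolloutResult(current, False, history)


if __name__ == "__main__":
    # Simulated defect that only shows up once half the users are exposed.
    result = staged_rollout([1, 10, 50, 100], lambda percent: percent >= 50)
    for step in result.history:
        print(step)
    print("rolled back:", result.rolled_back)
```

The sketch makes the dependency explicit: the rollout is only as safe as the health check and the rollback step it relies on, which is why shift-right testing needs solid operations infrastructure behind it.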

But there are risks to using production environments to monitor and test software, and of course, the intention of shift-right testing was never to replace unit, API, and UI testing practices before deployment! Shift-right testing is a complementary practice that extends the philosophy of continuous testing into production. Despite this, organizations can easily abuse the concept to justify doing even less unit and API testing during development. To prevent this, we need to make testing during the development phases easier and more productive, so that it produces better-quality software.

Read Part 2: Testing Smarter, Not Harder, by Focusing Your Testing
